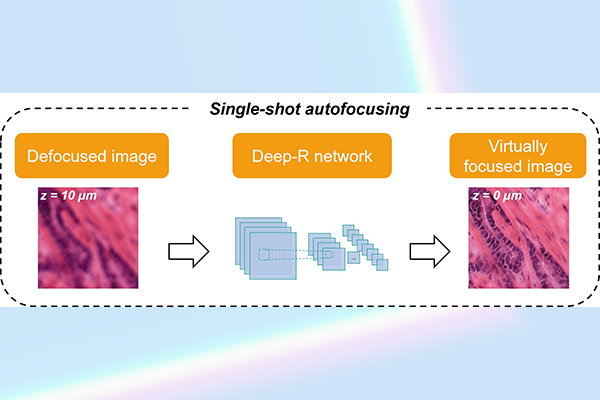

Figure Caption: UCLA researchers created a deep learning-based autofocusing technique (termed Deep-R) for bringing microscopy images into focus much faster than other approaches.

Optical microscopes are frequently used in the biomedical sciences to reveal fine features of specimens, such as human tissue samples and cells, and they form the backbone of pathological imaging for disease diagnosis. One of the most critical steps in microscopic imaging is autofocusing, which ensures that different parts of a sample can be rapidly imaged in focus, revealing details smaller than one millionth of a meter. Manual focusing of these microscope images by an expert is impractical, especially for rapid imaging of large numbers of specimens, such as in a pathology laboratory that processes hundreds of patient samples every day.

UCLA researchers have created a new image autofocusing technique that digitally brings a given microscopy image into focus without special microscope hardware or equipment during the image acquisition phase. The approach is based on deep learning: an artificial neural network is trained to take a single defocused image as its input and rapidly create an in-focus image of the same sample, without any prior knowledge of the defocus distance or any assumptions about the image blur function.
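The idea of learning a mapping from a defocused image to an in-focus image, without knowing the defocus distance, can be illustrated by how training pairs might be assembled. The Python sketch below assumes a hypothetical focal stack (images of the same field of view at several axial positions) whose in-focus slice is already known; the function name `make_training_pairs` and the `max_offset` parameter are illustrative, not taken from the paper. Only images are stored in each pair: the defocus distance is discarded, so a network trained on such pairs would have to infer the correction from image content alone.

```python
from typing import List, Sequence, Tuple

import numpy as np


def make_training_pairs(
    focal_stack: Sequence[np.ndarray], in_focus_index: int, max_offset: int
) -> List[Tuple[np.ndarray, np.ndarray]]:
    """Hypothetical helper: pair each slice within +/- max_offset of focus
    with the in-focus slice as the training target.

    The axial offset is deliberately NOT stored, mirroring the idea that the
    network receives only the defocused image as input.
    """
    if not 0 <= in_focus_index < len(focal_stack):
        raise ValueError("in_focus_index is outside the focal stack")
    target = focal_stack[in_focus_index]
    pairs = []
    for i, slice_ in enumerate(focal_stack):
        if abs(i - in_focus_index) <= max_offset:
            pairs.append((slice_, target))  # (input image, in-focus target)
    return pairs


# Usage with a toy 11-slice stack whose in-focus slice is index 5.
stack = [np.full((4, 4), float(i)) for i in range(11)]
pairs = make_training_pairs(stack, in_focus_index=5, max_offset=3)
print(len(pairs))  # 7
```

Whether the in-focus slice itself is included as an input (offset 0), as it is here, is a design choice in this sketch; including it would teach a network to leave already-focused images unchanged.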

In a study published in ACS Photonics, a journal of the American Chemical Society, the UCLA team demonstrated this deep learning-based autofocusing method on human samples, including breast, ovarian and prostate tissue sections, imaged with fluorescence and brightfield microscopes. Compared with standard autofocusing algorithms, UCLA's neural network increased the autofocusing speed of a microscope 15-fold, a major time saving that is especially significant for pathology laboratories that need to rapidly image large numbers of tissue samples. Because it is simple to implement, purely computational and requires no hardware modifications to the imaging system, this deep learning-enabled autofocusing approach is applicable to a wide range of microscopes.
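For context on the speed comparison: conventional image-based autofocus typically captures several images at different focal positions and keeps the one that maximizes a sharpness score, whereas the network needs only a single defocused image. The Python sketch below, using NumPy, illustrates that conventional loop with the Brenner gradient, one common focus measure. The choice of metric, the simulated camera `simulated_acquire`, and the z range are illustrative assumptions, not the baseline algorithms used in the UCLA study.

```python
from typing import Callable, Sequence, Tuple

import numpy as np


def brenner_gradient(image: np.ndarray) -> float:
    """Brenner focus measure: sum of squared differences between pixels two
    columns apart. Sharper images carry more fine detail, so they score higher."""
    img = image.astype(np.float64)
    diff = img[:, 2:] - img[:, :-2]
    return float(np.sum(diff ** 2))


def autofocus_by_z_scan(
    acquire: Callable[[float], np.ndarray], z_positions: Sequence[float]
) -> Tuple[float, int]:
    """Conventional image-based autofocus: capture one image per focal position,
    return the sharpest position and the number of images it took."""
    scores = [brenner_gradient(acquire(z)) for z in z_positions]
    best = int(np.argmax(scores))
    return z_positions[best], len(z_positions)


def simulated_acquire(z: float, true_focus: float = 0.0) -> np.ndarray:
    """Hypothetical camera stand-in: a striped test pattern blurred by more
    passes of a 3-tap box filter the farther z is from true_focus."""
    img = np.tile(np.array([0.0, 0.0, 1.0, 1.0] * 8), (16, 1))
    for _ in range(int(round(abs(z - true_focus)))):
        img = (np.roll(img, 1, axis=1) + img + np.roll(img, -1, axis=1)) / 3.0
    return img


best_z, n_images = autofocus_by_z_scan(
    lambda z: simulated_acquire(z, true_focus=2.0), list(range(-5, 6))
)
print(best_z, n_images)  # 2 11
```

The number of acquisitions grows with the number of focal positions scanned, which is why a method that works from one defocused image can save time. The 15-fold figure comes from the team's own experiments and is not reproduced by this toy example.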

The research was led by Professor Aydogan Ozcan, Volgenau Chair for Engineering Innovation and a Chancellor's Professor of electrical and computer engineering at UCLA, USA. The other authors are graduate students Luzhe Huang and Yilin Luo and Professor Yair Rivenson, all from the Department of Electrical and Computer Engineering at UCLA. Ozcan also has UCLA faculty appointments in bioengineering and surgery, and is an associate director of the UCLA California NanoSystems Institute (CNSI) and an HHMI professor.

Link to paper: https://pubs.acs.org/doi/10.1021/acsphotonics.0c01774