To help identify diseases, pathologists typically apply colored dyes, or stains, by hand to label tissue samples. The stains help highlight microscopic structures and provide a visible color contrast between healthy tissue and anomalies.

But the process is time-intensive and expensive, requiring sophisticated laboratory equipment and trained personnel. It also changes the tissue sample, which prevents scientists from conducting additional molecular analysis if they need to.

Now, Chancellor’s Professor Aydogan Ozcan and Adjunct Professor Yair Rivenson, both of electrical and computer engineering, have pioneered a way to use deep learning—a subset of machine learning—to digitally and rapidly stain tissue samples without the need for expert human intervention.

It requires only a standard microscope and laptop computer, and it could be especially impactful for making medical diagnoses in developing nations and other areas with limited resources.

The research was published in the journal Nature Biomedical Engineering.

The AI-based technology’s speed is what sets it apart from today’s more widely used approaches.

“Imagine a surgeon’s need to rapidly assess when they have reached non-cancerous tissue while removing a tumor,” Ozcan said. “Instead of manually staining tissue during the course of the surgery, virtual staining can provide an answer much faster.”

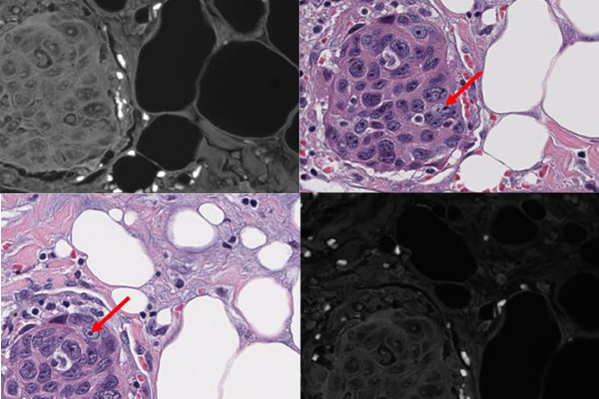

The neural network, a type of machine learning method inspired by the human brain, learns to digitally mimic the staining process from thousands of stained tissue images, a one-time training effort. Once trained, it transforms a microscopic image of the naturally present fluorescent compounds in unstained tissue into an equivalent image, but with a digital stain.

The result replicates the image of a stained tissue sample that a technician would prepare in a lab. Manual staining generally takes one to two hours, depending on the stain type, while the neural network generates the digital version in only minutes.

“Virtual staining gives us capabilities that are nearly impossible to achieve today, such as simultaneously staining the same tissue sample with multiple types of stains for a more accurate diagnosis,” Rivenson said.

An added bonus is that the technique could eliminate variations in staining quality, which can arise when different technicians manually stain samples, and reduce the odds of misdiagnosing or missing anomalies.

The engineers demonstrated the method on sections of the salivary gland, thyroid, kidney, liver and lung. Board-certified pathologists independently evaluated the technique’s accuracy in a blind study, in which they did not know which images were stained by technicians and which were virtually stained by the neural network. The study revealed no clinically significant difference in staining quality or medical diagnoses between the two sets of images.

The research team includes UCLA graduate students Hongda Wang, Kevin de Haan and Zhensong Wei. Clinical validation was directed by Dr. W. Dean Wallace of the Department of Pathology and Laboratory Medicine at the David Geffen School of Medicine at UCLA.

The research was supported by the Koc Group, the National Science Foundation and the Howard Hughes Medical Institute.