News | January 19, 2026

Quantum Light Matter Cooperative Unveils First-Ever Mode-Locked XFEL Experimental Demonstration

The Quantum Light Matter Cooperative (QLMC), in collaboration with colleagues at Switzerland’s Paul Scherrer Institute, recently unveiled the first-ever experimental demonstration of a mode-locked X-ray free-electron laser (XFEL). The system produced a coherent, structured train of attosecond pulses in the X-ray regime, offering unprecedented control over light at the attosecond scale and opening new frontiers for probing ultrafast electron dynamics.

This scheme was first proposed by Thompson and McNeil in 2008, but the complexity of these large-scale instruments has made such achievements slow to materialize.

QLMC looks forward to extending this demonstration to new coherent physical regimes and to cost-effective, compact solutions.

Please see the LinkedIn post.

Distinguished Professor Yahya Rahmat-Samii Delivers Master Talk at IEEE MAPCON 2025 in Kochi, India

Distinguished Professor Yahya Rahmat-Samii delivered an hour-long Master Talk entitled “From Darwin to Swarms to Brainstorms: Evolutionary Optimization for Modern Electromagnetic Engineering Design” at the 2025 IEEE Microwave Antennas and Propagation Conference (MAPCON). The conference was held in Kochi, India, from December 14–18, 2025, and was jointly sponsored by the IEEE Antennas and Propagation Society (AP-S), the IEEE Microwave Theory and Techniques Society (MTT-S), the Indian Institute of Space Science and Technology, the Indian Space Research Organization (ISRO), and several other organizations.

The Master Talk highlighted the rapidly expanding role of advanced computational techniques in the optimal design of complex engineering systems, emphasizing the growing importance of Evolutionary Optimization (EO) methodologies over traditional brute-force approaches. It focused on nature-inspired algorithms, including Genetic Algorithms (GA), Particle Swarm Optimization (PSO), and Brain Storm Optimization (BSO), explaining their underlying principles and recent advances. The presentation demonstrated the application of these techniques to a wide range of electromagnetic engineering problems, spanning space and planetary missions, medical and wireless devices, multifunctional and adaptive antennas, metamaterials, and nanostructures, while also assessing their practical advantages and limitations. In addition, it discussed the integration of machine learning and AI platforms to accelerate computational processes and further enhance optimization efficiency.

The conference attracted more than 1,800 participants from across the globe. Prof. Rahmat-Samii’s Master Talk was widely regarded as one of the highlights of MAPCON 2025 and was attended by the Presidents of both IEEE AP-S and IEEE MTT-S, along with numerous distinguished Indian dignitaries, registered delegates, and young professionals. The accompanying images capture moments from his presentation and the appreciation ceremony that followed, during which he was honored with a model of an Indian satellite featuring a reflector antenna inspired by his seminal publications. The images also include Dr. C. Saha, Chair of the MAPCON Conference. Additionally, Prof. Rahmat-Samii was invited to present his “15 Life Lessons” to all conference participants, and the presentation was very well received.

In his post-conference email dated December 24, 2025, Dr. Saha wrote, “Your presence at MAPCON 2025 made it a special, glorious event. Your talk was brilliant, which is not a surprise as we all know that you are a legend of our field. Once again, I extend my most sincere thanks and regards to you for being the center of attraction in MAPCON 2025.”

UCLA CHIPS Hosts Researchers from South Korea’s Ministry of Science and ICT, Discusses Collaboration

A delegation from South Korea’s Ministry of Science and ICT (MSIT) and the Electronics and Telecommunications Research Institute (ETRI) recently visited the UCLA Center for Heterogeneous Integration and Performance Scaling (CHIPS) to explore potential collaboration in advanced semiconductor packaging and heterogeneous integration. The visit included laboratory tours and technical discussions on 3D integration, wafer-level packaging, and system-level scaling, with both sides expressing strong interest in joint research initiatives, researcher exchanges, and long-term U.S.–Korea cooperation in semiconductor innovation.

Ozcan Lab Develops Model-Free Reinforcement Learning-Based Training Framework for Diffractive Optical Processors

UCLA’s Ozcan Lab recently published a new journal article that introduces a model-free, in situ training framework for diffractive optical processors, driven by a reinforcement learning algorithm. Rather than relying on a digital twin or on knowledge of an approximate physical model, the system learns directly from real optical measurements, optimizing its diffractive features on the hardware itself. The approach could be extended to photonic accelerators, nanophotonic processors, adaptive imaging systems, and real-time optical AI hardware.

Please see the paper and the news release.

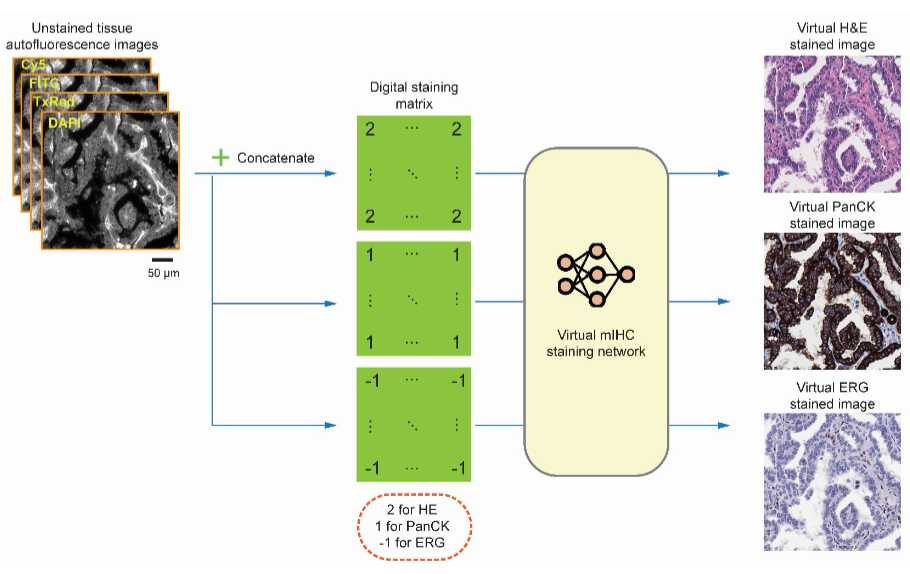

Ozcan Lab Develops Deep Learning-Based Virtual Multiplexed Immunostaining

Ozcan Lab developed a deep learning-based method that can digitally generate multiple immunohistochemical stains from a single, unstained tissue section. The approach enables accurate assessment of vascular invasion, a key indicator of cancer aggressiveness, without the need for conventional chemical staining procedures.

Please see the paper and the news release.

UCLA CHIPS Celebrates 10 Years at the Fifth Annual CHIPS Symposium

The UCLA Center for Heterogeneous Integration and Performance Scaling (CHIPS), led by Professor Subramanian Iyer, hosted an alumni dinner in Milpitas, CA, after the IEEE International Electron Devices Meeting (IEDM). Professor Iyer shared that it was a pleasure to meet our alumni, learn how they are doing, and reconnect.

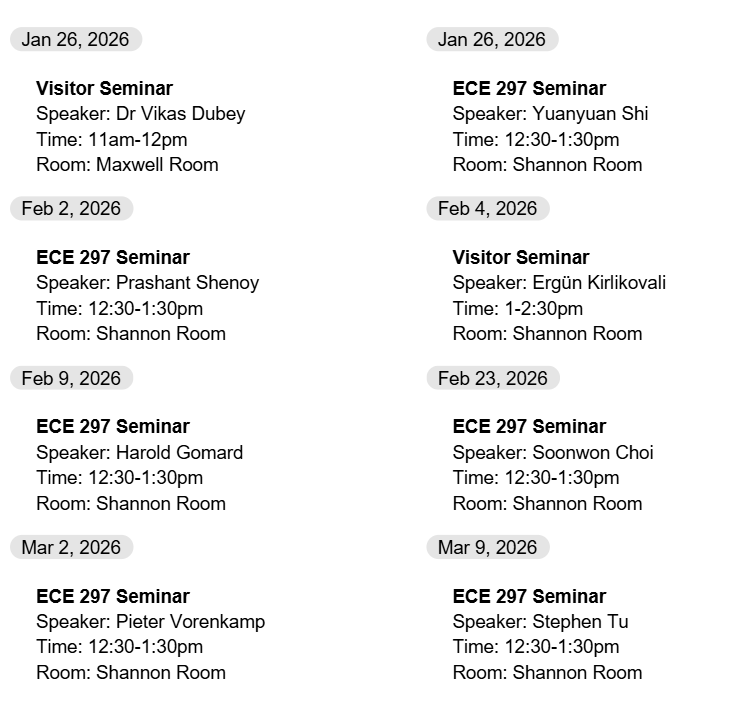

Events/Seminars

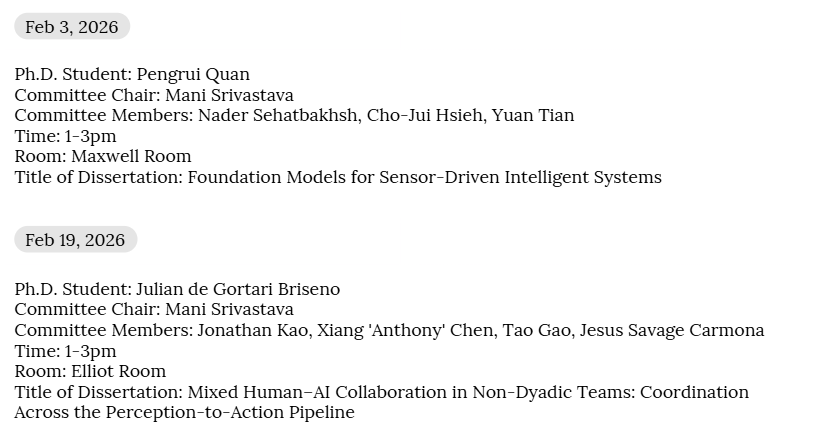

Upcoming PhD Defenses

Student Organizations

Invitation to Mentor: Connect with the Next Generation of UCLA ECE Students

The UCLA chapter of Eta Kappa Nu (HKN) is launching its Winter Mentorship Program and is looking for alumni to help bridge the gap between academic life and industry reality.

We are seeking UCLA engineering alumni to:

- Provide 1:1 advice to current students.

- Share insights on current industry trends.

- Help students understand what it takes to thrive in the field today.

We are also planning an in-person get-together for mentors and mentees in the Spring (food provided!) to help build community and connect face-to-face.

Whether you are working in hardware, software, or research, your experience is invaluable to the next generation of Bruins.

How to Join:

If you are willing to mentor a student this Winter Quarter, please fill out this brief form.

Job Opportunities

Johns Hopkins University Data Science and AI Institute Postdoctoral Fellowship Program

The Johns Hopkins University Data Science and AI Institute welcomes applications for its Postdoctoral Fellowship program, seeking disciplinarily diverse scholars to advance foundational methods of data science and artificial intelligence, and their applications across all areas, including science, health, medicine, the humanities, engineering, policy, governance, democracy, and ethics.

The postdoctoral fellows will join the Institute’s growing research community and collaborate with its broad array of faculty members. Fellows will form an interdisciplinary cohort of outstanding scholars who will contribute to the research, education, policy, and outreach goals of the Institute.

Program Benefits:

- Competitive salary

- Academic travel stipend

- Mentoring by DSAI-affiliated faculty and collaborators

- Access to compute resources

- Participation in center-supported educational initiatives and projects

Positions are for 2 years with the possibility of an extension pending satisfactory performance and continued funding.

While candidates who complete their applications by the January 23 deadline will receive full consideration, we may consider applications submitted after that date until all positions have been filled.

Please see the application and website.

For any questions about the program, please contact DSAI-Academics@jh.edu.

Cellular SOC Design Verification Engineer – Entry Level at Apple

Do you have a passion for invention and self-challenge? This position gives you an opportunity to be part of one of the most cutting-edge and key projects that Apple’s Silicon Engineering Group has embarked upon to date. As a Design Verification Engineer on our team, you’ll be at the center of the verification effort within our silicon design group responsible for crafting and productizing state-of-the-art Cellular SoCs!

You will have the opportunity to contribute to the verification effort of a set of complex SoCs delivering the Cellular solution. You will integrate multiple sophisticated IP-level DV environments, craft a highly reusable, best-in-class UVM-based testbench, implement effective coverage-driven and directed test suites, and deploy new tools and methodologies to deliver chips that are right the first time. By collaborating with other product development groups across Apple, you can push the industry boundaries of what cellular systems can do and improve the product experience for our customers across the world!

Minimum Qualifications:

BS in EE or CS is required

Object-Oriented Programming

Coursework in Digital Design

Preferred Qualifications:

MS in EE or CS

Coursework in Computer Architecture and Networking Protocols

Should be a great teammate with excellent communication and problem-solving skills and the desire to seek diverse challenges.

Programming experience in SystemVerilog, Python, C++

Application details:

- Candidates must be graduating this December

- Please include your current GPA with your resume

- Please send resume directly to Junhui Lou at j_lou@apple.com

Carnegie Mellon University – ECE Faculty Position

The Department of Electrical and Computer Engineering at Carnegie Mellon University invites applications for tenure-track, research-track, and teaching-track faculty positions at all ranks on our Pittsburgh campus. Although we welcome applicants in all areas of ECE, this year we are particularly interested in candidates whose research focuses on machine learning and artificial intelligence.

Our top-ranked department offers a highly collaborative environment, world-class research infrastructure, and strong support for interdisciplinary scholarship. Applicants should hold a PhD in a relevant field. Application review will begin shortly and will continue until the positions are filled.

Faculty application link: https://apply.interfolio.com/174597

When applying, please also email Andrea Zanette at zanette@cmu.edu

Hiring PhD Interns/FTE for Training and Inference of Foundation Models in AWS Neuron Science

From Amazon Web Services Neuron Science:

Our team in AWS AI Research is looking for a couple of PhD FTEs/interns passionate about advancing fundamental research in foundation models. Our algorithm team focuses on developing innovative solutions in two areas: Algorithm-System for LLMs (efficient model design, training, and inference) and LLM-for-System (LLMs and agents that write optimized kernels). These efforts require both a strong theoretical foundation and a practical understanding of topics such as foundation modeling, distributed optimization, model compression, and machine learning systems. We work closely with system researchers to ensure our algorithmic solutions are compatible with system and hardware constraints, and we train and test end to end for both accuracy and speed. At the same time, our algorithmic innovations can help improve systems and inform future hardware features.

Basic Qualifications

- Hands-on experience with large language models (LLMs), including:

  - Model architecture, datasets, evaluation metrics, and pre-/post-training techniques

  - Distributed training techniques, such as parallelism, mixed-precision methods, optimizers, stochastic rounding, and IO-aware training

  - Compression and inference strategies, including pruning, quantization, and speculative decoding

  - Post-training with SFT and RL to enhance reasoning capabilities

  - Leveraging LLMs to improve ML systems and kernel programming

- Eager to learn effective system kernel implementation if needed

- Enrolled PhD student in Computer Science, Electrical Engineering, Mathematics, or a related field

- First-authored papers in top venues such as NeurIPS, ICLR, and ICML

Preferred Qualifications

- Strong theoretical background, such as distributed optimization, compression, or RL

- Strong hands-on experience with pre-training (e.g., low-precision training) or post-training (e.g., SFT, RLHF, GRPO)

- Proven experience with foundation models, including LLMs and multi-modal models

- Experience in establishing scaling laws for training and for inference-time scaling

- Knowledge of distributed systems and experience developing low-level, high-performance kernels

Selected Publications

This year, our team has published multiple papers that bridge the gap between theory and practice in LLM research. We also actively collaborate with system and hardware engineers across the AWS organization to deliver tutorials. Below is a list of recent publications:

- [Under review] MuonBP: Faster Muon via Block-Periodic Orthogonalization arXiv

- [Under review] Scaling Laws Meet Model Architecture: Toward Inference-Efficient LLMs arXiv

- [Under review] TritonRL: Training LLMs to Think and Code Triton Without Cheating arXiv

- [Under review] Demystifying Transition Matching: When and Why It Can Beat Flow Matching arXiv

- [Under review] Not-a-Bandit: Provably No-Regret Drafter Selection in Speculative Decoding for LLMs arXiv

- [IJCAI 2025] Tutorial: Scaling LLM Training: Efficient Pre-training & Fine-tuning on AI Accelerators

- [AISTATS 2025] Training LLMs with MXFP4 Paper

- [AISTATS 2025] Stochastic Rounding for LLM Training: Theory and Practice Paper

- [ICML 2025] ProxSparse – Regularized Learning of Semi-Structured Sparsity Masks for Pretrained LLMs Paper

- [ICML 2025] RoSTE – An Efficient Quantization-Aware Supervised Fine-Tuning Approach Paper

- [ICML 2025] WaveletToken – Enhancing Foundation Models for Time Series Forecasting via Wavelet-based Tokenization Paper

- [ICML 2024] Collage: Light-Weight Low-Precision Strategy for LLM Training Paper

- [ICML 2024] Variance-reduced Zeroth-Order Methods for Fine-Tuning Language Models Paper

- [ICML 2024] MADA: Meta-Adaptive Optimizers through Hyper-gradient Descent Paper

- [ICML 2024] EMC^2: Efficient MCMC Negative Sampling for Contrastive Learning with Global Convergence Paper

- [NeurIPS 2024] Posterior Sampling with a Diffusion Prior for Online Learning Paper

- [IEEE BigData 2024] HLAT: High-quality Large Language Model Pre-trained on AWS Trainium Paper

- [KDD 2024] Survey: Inference Optimization of Foundation Models on AI Accelerators Paper

- [KDD 2024] Tutorial: Inference Optimization of Foundation Models for AI Accelerators

- [KDD 2023] Tutorial: Training Large-scale Foundation Models on Emerging AI Chips

We also collaborate on more system-oriented topics, as in the publications below:

- [MLSys 2025] Marconi: Prefix Caching for the Era of Hybrid LLM Paper

- [MLSys 2025] ScaleFusion: Scalable Inference of Spatial-Temporal Diffusion Transformers for High-Resolution Long Video Generation Paper

- [MLSys 2025] FastTree: Optimizing Attention Kernel and Runtime for Tree-Structured LLM Inference Paper

If you are excited to push the boundaries of foundation model research and make impactful contributions, we would love to hear from you! We are also continually hiring full-time PhDs. Please send your CV to Youngsuk Park at pyoungsu@amazon.com | youngsuk@cs.stanford.edu (cc Kaan Ozkara, kaanozka@amazon.com) with a short paragraph describing your interest.

TSMC Global Summer Internship Program

Postdoctoral Researcher: Modeling and Stability Analysis for Modern Power Systems

Please see this link for more information regarding this position.

Researcher II/III: EMT Modeling, Simulation and Analysis for Large-Scale Power Systems

Please see this link for more information regarding this position.

Part-Time Project with Professor Pirouz Kavehpour

We’re seeking an expert developer for a project‑based engagement (not hourly) to build and optimize scalable applications, deploy on Azure Cloud, and integrate GPU‑accelerated embedding software. This role requires deep technical expertise, independence, and the ability to deliver high‑quality solutions within defined project milestones.

What You’ll Work On

- GPU Embedding Service (Containerized): FastAPI (Python), ML/embeddings, Docker + NVIDIA Container Toolkit, Azure GPU (VM/AKS/ACI), CI/CD with Azure Container Registry (ACR).

- Triage Pipeline with DB & Integrations: Node.js/TypeScript (workers/cron), PostgreSQL (SQL, locks), REST API integration, Azure services (Blob, AI Search, Webhooks).

- Website Integration & Admin UI: Next.js 15 (App Router, API routes, Server Actions), TypeScript + React, PostgreSQL queries, UI/UX for job tables & status.

Requirements

- Expertise in Next.js 15, Node.js, TypeScript, React, and PostgreSQL.

- Hands-on experience deploying to Azure (preferably using ACR, AKS/ACI, Web Apps, and managed services).

- Proficiency with containerized GPU workflows (Docker, NVIDIA Container Toolkit).

- Strong understanding of REST APIs, background workers/cron, SQL performance (indexes, locks), and secure auth patterns.

- Track record of independently delivering production-grade solutions on schedule and to spec.

Nice to Have

- FastAPI and Python ML/embeddings experience.

- Azure AI Search, Blob, and Webhooks integrations.

- CI/CD pipelines (GitHub Actions or Azure DevOps).

- Admin UI/Design System experience for operational dashboards.

How We Work

Project‑based milestones, clear acceptance criteria, frequent check‑ins, and code reviews.

We value pragmatic engineering, performance, and maintainability.

Send your information to Prof. Pirouz Kavehpour at Pirouz@seas.ucla.edu

The University of Alabama Is Seeking Professors

If you are completing your PhD this year (or have recently graduated) and are interested in continuing your research career on the tenure track, the University of Alabama is searching for an assistant professor.

The search is currently active, and they’ll begin reviewing applications received through the online submission system in the next few weeks.

They are also recruiting at the Associate and Full Professor levels. Further details are available at the link below:

Electrical and Computer Engineering – Professor – 527632 – Tuscaloosa, Alabama, United States

Electrical/Computer Engineering Intern at Sophia Space

A well-funded deep-tech startup in Pasadena is seeking Electrical/Computer Engineering Interns to help develop its AI-powered product prototypes. The interns are expected to blend electronics hardware and embedded software to build groundbreaking AI infrastructure in space. Roles are available full-time or part-time starting immediately, with the option to continue part-time (~10–15 hrs/week) during the academic year.

Please see this link for more information regarding this position.

Engineer or Project Manager at DMF Lighting

DMF Lighting designs and builds industry-leading LED downlighting that sets the standard for flexibility, performance, and quality. Founded over 30 years ago, DMF has grown into a leader in the lighting industry, driven by a passion for innovation and customer service.

Our in-house engineers constantly push the boundaries of lighting, delivering products that combine exceptional performance with beautiful design. At DMF, we believe in a collaborative, forward-thinking culture that empowers our team to bring creative ideas to life and make a lasting impact. If you’re looking for a company where creativity and innovation are part of the DNA, DMF is the place for you.

We currently have the following openings available:

Electrical Engineer, Sr. Electrical Engineer, Embedded Firmware Engineer, Mechanical Engineer I, Mechanical Engineer, Project Manager

Website: www.dmflighting.com

Please see this link for more information regarding these positions.

Contact: Helen Portillo, Human Resources Generalist at HPortillo@dmflighting.com

Newsletter Submissions

To be included in future newsletters, please send the latest news, awards, publications, and any upcoming PhD oral defenses to the Chair’s assistant, Winda Mak, at wmak@seas.ucla.edu. Please include “newsletter submission” in the subject line. The ECE newsletter is sent twice a month, on the first and third Mondays. Please ensure all submissions are received by the Wednesday before distribution to be included in the newsletter.