Information processing with light is a topic of ever-increasing interest among optics and photonics researchers. Apart from the quest for a fast, energy-efficient alternative to electronic computing, this interest is also driven by emerging technologies such as autonomous vehicles, where ultrafast processing of natural scenes is of utmost importance. Since natural lighting conditions mostly involve spatially incoherent light, processing visual information under incoherent light is crucial for various imaging and sensing applications. Additionally, state-of-the-art microscopy techniques for high-resolution imaging at the micro- and nano-scale also depend on spatially incoherent processes such as fluorescence emission from specimens.

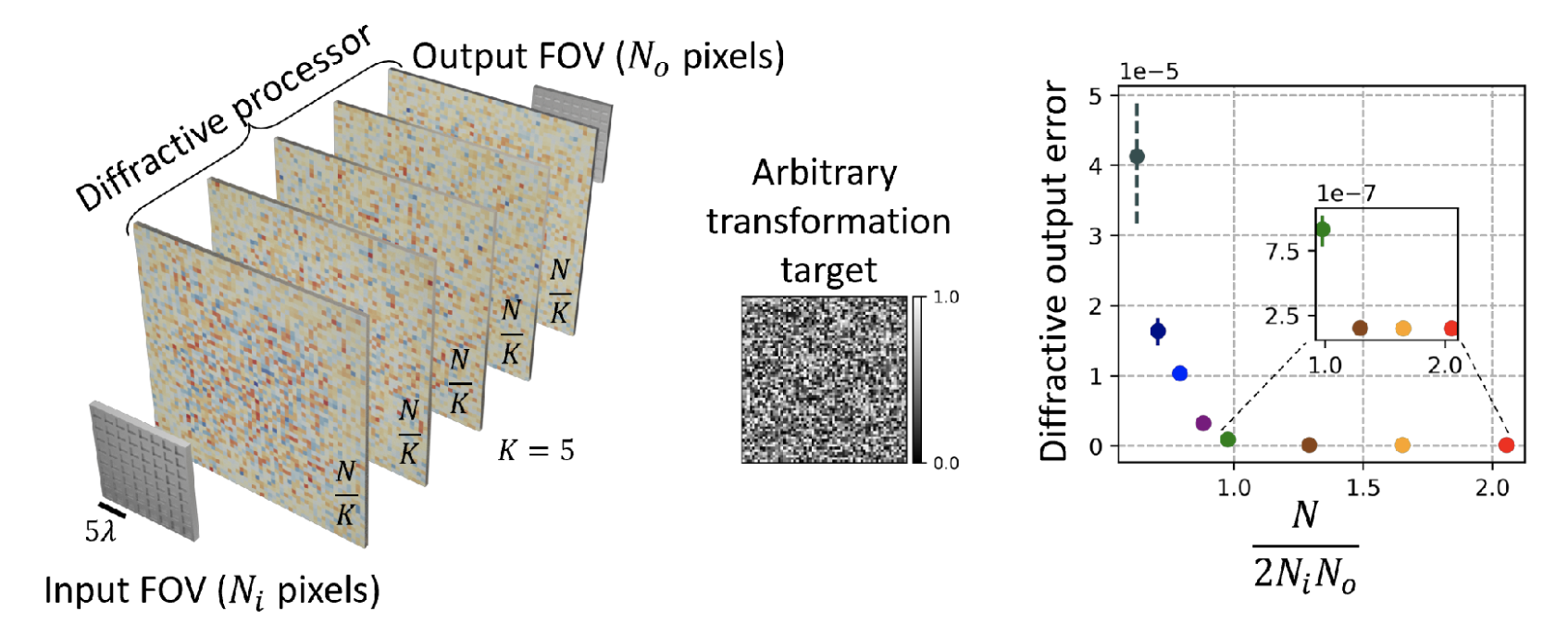

In a new article published in Light: Science & Applications, a team of researchers, led by Professor Aydogan Ozcan from the Electrical and Computer Engineering Department of the University of California, Los Angeles (UCLA), USA, has developed methods for designing all-optical universal linear processors of spatially incoherent light. Such processors comprise a set of structurally engineered surfaces and exploit successive diffraction of light by these structured surfaces to perform a desired linear transformation of the input light field without using external digital computing power.

The UCLA researchers reported deep learning-based design methods for performing an arbitrary linear transformation on the optical intensity of spatially incoherent light. Once fabricated using, for example, lithography or 3D-printing techniques, these diffractive optical processors can perform an arbitrarily selected linear transformation on any input light intensity pattern, producing at the output the pattern dictated by the learned target function. The researchers also demonstrated that, with spatially incoherent broadband light, multiple linear intensity transformations can be performed simultaneously, with a different transformation assigned to each illumination wavelength.
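To make the phrase "linear transformation of intensity" concrete, the following minimal Python sketch illustrates the standard Fourier-optics result that underlies it. It is not the authors' deep learning design method. The complex matrix `H` is a hypothetical stand-in for the field response of a diffractive processor, and spatial incoherence is modeled, as an assumption, by giving every input pixel an independent random phase and averaging the output intensity over many trials. Under that assumption the averaged output intensity should match the element-wise squared magnitude of `H` applied to the input intensity.

```python
import cmath
import math
import random


def coherent_output(H, field):
    """Apply a complex field-transfer matrix H to a complex input field."""
    return [sum(h * f for h, f in zip(row, field)) for row in H]


def incoherent_intensity(H, intensity, n_trials=20000, seed=0):
    """Estimate the time-averaged output intensity for incoherent input.

    Assumption: incoherence is modeled by drawing an independent,
    uniformly distributed phase for every input pixel in every trial.
    """
    rng = random.Random(seed)
    acc = [0.0] * len(H)
    for _ in range(n_trials):
        field = [
            math.sqrt(p) * cmath.exp(1j * rng.uniform(0.0, 2.0 * math.pi))
            for p in intensity
        ]
        for k, out in enumerate(coherent_output(H, field)):
            acc[k] += abs(out) ** 2
    return [a / n_trials for a in acc]


def intensity_matrix_prediction(H, intensity):
    """Closed-form expectation: output = (|H|^2 element-wise) @ input."""
    return [sum(abs(h) ** 2 * p for h, p in zip(row, intensity)) for row in H]


# Hypothetical 2x3 complex transfer matrix (illustrative values only).
H = [
    [0.5 + 0.2j, -0.3 + 0.4j, 0.1 - 0.6j],
    [0.2 - 0.7j, 0.6 + 0.1j, -0.4 + 0.3j],
]
intensity_in = [1.0, 0.5, 2.0]

simulated = incoherent_intensity(H, intensity_in)
predicted = intensity_matrix_prediction(H, intensity_in)

for s, p in zip(simulated, predicted):
    print(f"simulated {s:.3f}   predicted {p:.3f}")
```

The cross terms between different input pixels average out because their phases are independent, which is why the intensity response is linear even though each individual trial is a coherent, field-level computation. In this picture, a different `H` per illumination wavelength would give a different intensity transformation per wavelength.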

These findings have broad implications in numerous fields, including all-optical information processing and visual computing with spatially and temporally incoherent light, as encountered in natural scenes. Additionally, this framework holds significant potential for applications in computational microscopy and incoherent imaging with spatially varying engineered point spread functions (PSFs).

The authors of this work are Md Sadman Sakib Rahman, Xilin Yang, Jingxi Li, Bijie Bai and Aydogan Ozcan of the UCLA Samueli School of Engineering. The researchers acknowledge funding from the US Department of Energy (DOE).

See the article:

Md Sadman Sakib Rahman, Xilin Yang, Jingxi Li, Bijie Bai, Aydogan Ozcan, “Universal Linear Intensity Transformations Using Spatially Incoherent Diffractive Processors,” Light: Science & Applications

https://doi.org/10.1038/s41377-023-01234-y